Random Replication Leads to Definitive Data Loss

Lazy non-determinism

Disciplined determinism

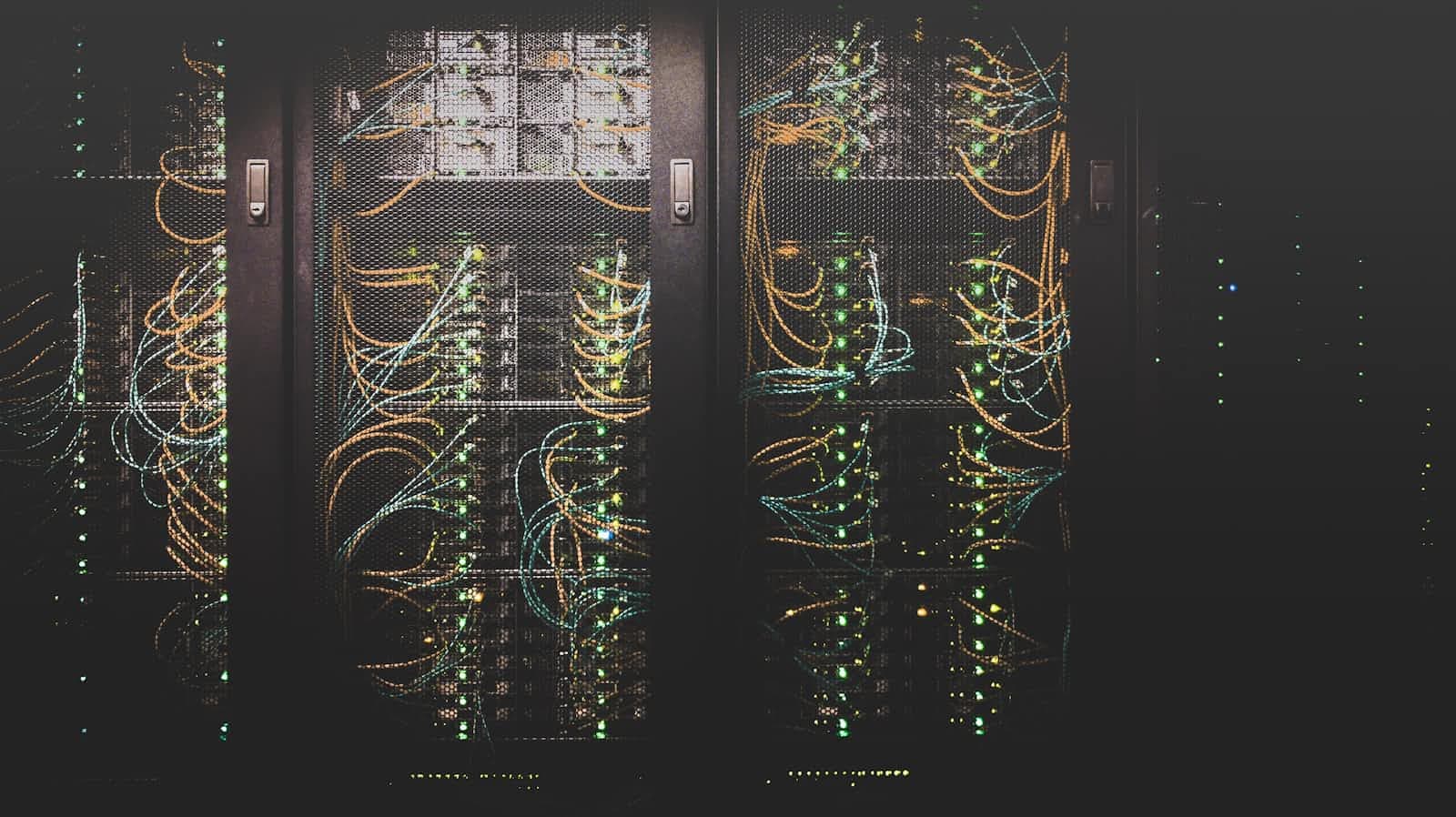

In the world of distributed systems, there is a common pattern of replication which is often used to prevent data loss (in reality, we mean data unavailability since usually data gets backed up to disk and disks almost never completely fail) in storage systems.

The pattern goes like this:

1.) Store a data chunk A on node 1

2.) Replicate chunk A to nodes 2 and 3 so that you can lose 2 of those nodes and still not lose data

3.) Repeat for all your data chunks, randomly choosing the node for each replica among all N nodes in your system

If you do this, you can lose any 2 nodes and be sure not to lose any data.

But as systems grow in size, node failure becomes more and more frequent in absolute terms.

If you have a cluster of 10000 nodes, there is a good chance that at any moment more than 2 nodes are failing.

But you can expect to have a small percentage of failures - says 1% - and that should protect you, since it's very unlikely that the 1% of nodes that fail at the same time contain all 3 replicas for any chunk of data.....right?

WRONG!

Yes, it's true that this is how replication is implemented in many popular storage systems such as HDFS and GFS - but the truth is that for large clusters, the probability of data loss becomes 100% over the course of 1 year.